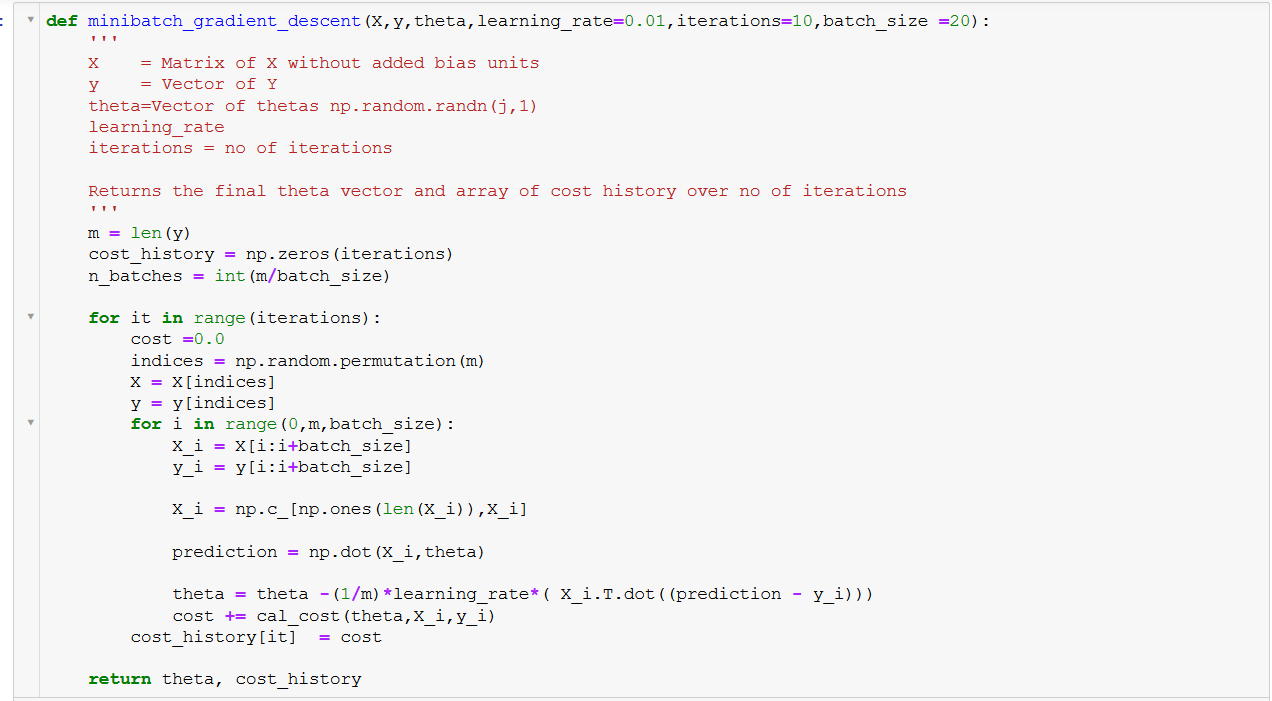

Gradient Descent works by iteratively moving toward the set of parameter values that minimizes a function, taking steps in the opposite direction of the gradient.

What is a Gradient?

A gradient is a vector-valued function that generalizes the slope of the tangent line to functions of several variables: at every point it points in the direction of the greatest rate of increase of the function. It is made of derivatives (one partial derivative per parameter), so it tells us the incline, or slope, of the cost function.

At this point I was completely lost, but what always helps me is to picture a problem graphically. So imagine you are at the top of a mountain. Your goal is to reach the field at the bottom, but there is a problem: you are blind. How can you come up with a solution? Well, you will have to take small steps, feel the incline around you, and move in the direction where the ground goes down most steeply. You do this iteratively, one step at a time, until you finally reach the bottom of the mountain.

That is exactly what Gradient Descent does: its goal is to reach the lowest point of the mountain. The mountain is the cost function plotted over the space of parameter values, and the size of each step you take is the learning rate. Feeling the incline around you and deciding which way is higher is calculating the gradient at the current parameter values, and this is repeated at every step. The chosen direction is the one in which the cost function decreases, that is, the opposite direction of the gradient. The lowest point of the mountain is the set of parameter values (the weights) at which the cost function reaches its minimum, which is where the model's predictions are most accurate.

As we said, at each point the gradient is a vector, so it has both a direction and a magnitude. The Gradient Descent algorithm multiplies the gradient by a number, called the learning rate (or step size), to determine the next point. For example, with a gradient of magnitude 4.2 and a learning rate of 0.01, the algorithm picks the next point 0.042 away from the previous one.
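The update rule described above can be sketched in a few lines of Python. This is a minimal, illustrative sketch that assumes we already know how to compute the gradient; the names `step` and `gradient_descent`, the stopping tolerance `tol`, and the two example functions (f(x) = x² and a 2-D bowl with its lowest point at (3, -1)) are hypothetical choices, not part of the original post.

```python
from typing import Callable, List

Vector = List[float]


def step(x: Vector, gradient: Vector, learning_rate: float) -> Vector:
    """One Gradient Descent update: move against the gradient, scaled by the learning rate."""
    return [xi - learning_rate * gi for xi, gi in zip(x, gradient)]


def gradient_descent(
    grad: Callable[[Vector], Vector],
    start: Vector,
    learning_rate: float = 0.01,
    max_steps: int = 10_000,
    tol: float = 1e-8,
) -> Vector:
    """Repeat the update until the steps become tiny (we are near the bottom)."""
    x = list(start)
    for _ in range(max_steps):
        new_x = step(x, grad(x), learning_rate)
        # Stop when no coordinate moved more than `tol` in this step.
        if max(abs(a - b) for a, b in zip(new_x, x)) < tol:
            return new_x
        x = new_x
    return x


# Worked example (hypothetical function): f(x) = x**2 has gradient 2x, so at
# x = 2.1 the gradient is 4.2; with a learning rate of 0.01 the point moves by about 0.042.
moved = step([2.1], [2 * 2.1], 0.01)
print("distance moved:", 2.1 - moved[0])


# A hypothetical 2-D "mountain": f(x, y) = (x - 3)**2 + (y + 1)**2, lowest point at (3, -1).
def grad_f(p: Vector) -> Vector:
    x, y = p
    return [2 * (x - 3), 2 * (y + 1)]


print("minimum found near:", gradient_descent(grad_f, start=[0.0, 0.0], learning_rate=0.1))
```

The stopping rule here (quit once a step becomes smaller than `tol`) is just one simple choice; a fixed number of steps or a threshold on the gradient's magnitude would work as well.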

In simple words, Gradient Descent is an optimization algorithm used in training a model: it finds the parameters that minimize the cost function (the error in prediction).
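To connect this to training a model, here is a minimal sketch that fits a straight line y = w·x + b by Gradient Descent on the mean squared error. The function names, the toy data (generated from y = 2x + 1), the learning rate of 0.05 and the 2000 epochs are assumptions made for illustration only.

```python
from typing import List, Tuple


def mse_cost(w: float, b: float, xs: List[float], ys: List[float]) -> float:
    """Cost function: mean squared error of the line y = w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train_linear_model(
    xs: List[float], ys: List[float], learning_rate: float = 0.05, epochs: int = 2000
) -> Tuple[float, float]:
    """Fit the parameters w and b by Gradient Descent on the MSE cost."""
    w, b = 0.0, 0.0  # starting point on the "mountain"
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of the MSE cost with respect to w and b.
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Take one step in the opposite direction of the gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]

w, b = train_linear_model(xs, ys)
print(f"w = {w:.4f}, b = {b:.4f}, cost = {mse_cost(w, b, xs, ys):.2e}")
```

On this data, w and b should approach 2 and 1 and the cost should drop close to zero. A learning rate that is too large can make the steps overshoot the bottom of the valley, so the cost grows instead of shrinking.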
